URBANCLIPATLAS: A Visual Analytics Framework for Event and Scene Retrieval in Urban Videos

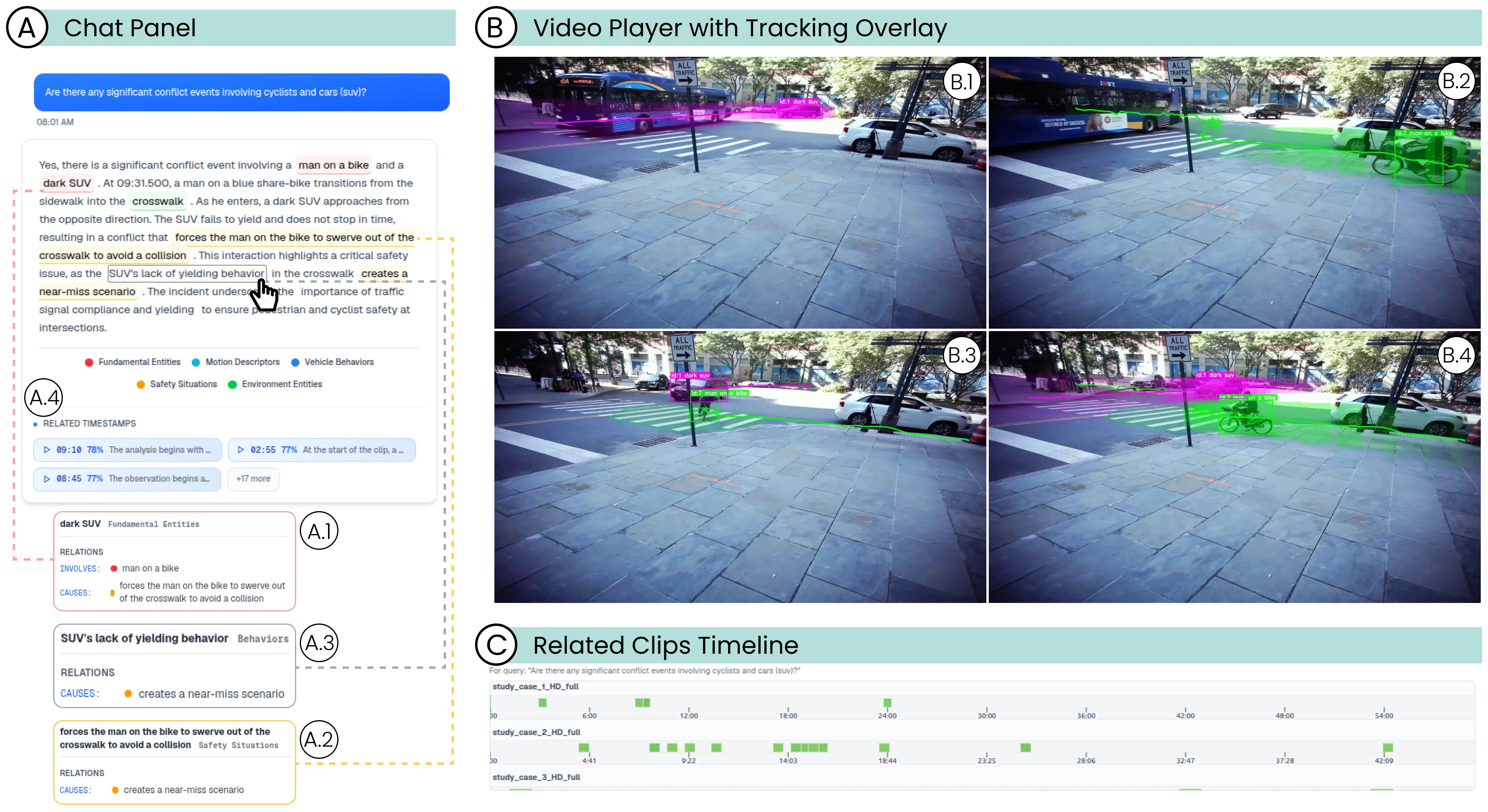

URBANCLIPATLAS interface. (A) The Chat Panel displays the user's query, the RAG-generated narrative answer, and entity-level tooltips linked to the knowledge graph. (B) The Video Player with tracking overlays shows the current frame with dynamic entities and highlighted static layout elements. (C) The Related Clips Timeline summarizes retrieved clips across videos, with cells encoded by their semantic relevance to the query.

Publication Details

- Venue: Computer Graphics Forum (Proc. EuroVis)
- Year: 2026
- Publication Date: April 6, 2026
- DOI: https://doi.org/10.1111/cgf.70431

Abstract

Extracting actionable insights from long-duration urban videos is often labor-intensive: analysts must manually sift through raw footage to pinpoint target events or uncover broader behavioral trends. In this work, we present URBANCLIPATLAS, a visual analytics system for exploring long urban videos recorded at street intersections. URBANCLIPATLAS combines retrieval-augmented generation (RAG), taxonomy-aware entity extraction, and video grounding to support event retrieval and interpretation. The system segments extended recordings into short clips, generates textual descriptions with a vision-language model, and indexes them for semantic retrieval. A knowledge graph maps entities and relations from LLM answers onto a domain-specific taxonomy and aligns them with detected objects and trajectories to support visual grounding and verification. URBANCLIPATLAS supports scene retrieval through an augmented chat-based interface and improves scene interpretation by tightly aligning textual outputs with video evidence. This design strengthens the connection between textual reasoning and visual evidence, reducing the effort required to validate model outputs and refine hypotheses. We demonstrate the usefulness of URBANCLIPATLAS on the StreetAware dataset through two case studies involving hazardous scenarios and crossing dynamics at street intersections. URBANCLIPATLAS helps analysts reason about safety- and mobility-related patterns across large urban video collections.
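The retrieval side of the pipeline described in the abstract (segment long recordings into short clips, describe each clip in text, then rank clips against a natural-language query) can be illustrated with a small sketch. This is a minimal sketch under stated assumptions, not the URBANCLIPATLAS implementation: the fixed 10-second clip length and the names `Clip`, `segment`, `embed`, `cosine`, and `retrieve` are hypothetical, the vision-language model is replaced by hand-written descriptions, and the semantic index is replaced by cosine similarity over bag-of-words counts.

```python
from __future__ import annotations

import math
from collections import Counter
from dataclasses import dataclass


@dataclass
class Clip:
    video_id: str
    start_s: float
    end_s: float
    # Hypothetical: in the described system this text would come from a vision-language model.
    description: str


def segment(video_id: str, duration_s: float, clip_len_s: float = 10.0) -> list[tuple[str, float, float]]:
    """Split a long recording into consecutive fixed-length windows (the last may be shorter)."""
    windows = []
    start = 0.0
    while start < duration_s:
        end = min(start + clip_len_s, duration_s)
        windows.append((video_id, start, end))
        start = end
    return windows


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a hypothetical stand-in for a learned text encoder."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def retrieve(query: str, clips: list[Clip], k: int = 3) -> list[tuple[float, Clip]]:
    """Rank clips by similarity between the query and each clip description; keep the top k."""
    q = embed(query)
    scored = [(cosine(q, embed(c.description)), c) for c in clips]
    scored.sort(key=lambda sc: sc[0], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    # Usage example with made-up descriptions for a 25-second recording.
    windows = segment("cam1", 25.0)
    descriptions = [
        "a pedestrian crosses while a car turns right",
        "empty intersection with parked cars",
        "cyclist runs a red light near a pedestrian",
    ]
    clips = [Clip(v, s, e, d) for (v, s, e), d in zip(windows, descriptions)]
    for score, clip in retrieve("pedestrian near turning car", clips, k=2):
        print(f"{clip.video_id} {clip.start_s:.0f}-{clip.end_s:.0f}s score={score:.2f}")
```

In a fuller setup, `embed` would presumably be a learned sentence encoder backed by a vector index, and the top-ranked clip descriptions would be passed as context to the language model that generates the answer, which is the retrieval-augmented generation pattern the abstract names.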

Cite this publication (BibTeX)

@article{2026-UrbanClipAtlas,
  title={URBANCLIPATLAS: A Visual Analytics Framework for Event and Scene Retrieval in Urban Videos},
  author={Joel Perca and Luis Sante Taipe and Juanpablo Heredia and Joao Rulff and Claudio Silva and Jorge Poco},
  journal={Computer Graphics Forum (Proc. EuroVis)},
  year={2026},
  url={https://doi.org/10.1111/cgf.70431},
  date={2026-04-06},
  volume={45},
  issue={3}
}