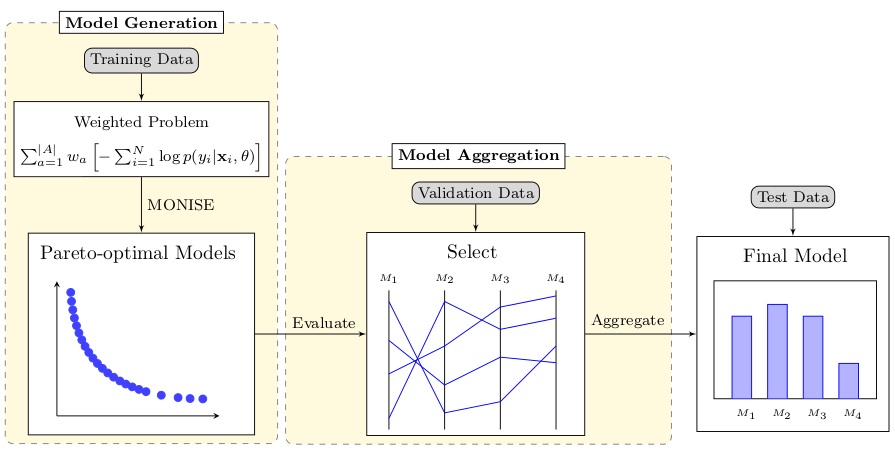

Visual description of the proposed methodology

Abstract

One of the main challenges of machine learning is to ensure that its applications do not generate or propagate unfair discrimination based on sensitive characteristics such as gender, race, and ethnicity. Research in this area typically constrains models to a level of discrimination quantified by a fairness metric (usually the discrepancy in “benefit” between privileged and non-privileged groups). However, reducing bias can also reduce a model's predictive performance (e.g., accuracy, F1 score), so conflicting objectives (performance and fairness) must be optimized at the same time. This is naturally framed as a multi-objective optimization (MOO) problem. In this study, we use MOO methods to minimize the difference between groups, maximize the benefits for each group, and preserve performance. We search for the best trade-off models in binary classification problems and aggregate them using ensemble filtering and voting procedures. Aggregating models with different levels of benefit for each group improves robustness with respect to both performance and fairness. We compared our approach with other known methodologies, using logistic regression as the baseline. The results show that (i) multi-objective training found models that are similar to or better than those of the adversarial methods and are more diverse in terms of fairness and accuracy metrics, (ii) multi-objective selection improved the balance between fairness and accuracy compared to selection with a single metric, and (iii) the final predictor achieved higher fairness without sacrificing much accuracy.
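The trade-off search and aggregation described in the abstract can be illustrated with a minimal sketch. It is not the paper's implementation: the fairness metric (a demographic parity gap), the toy predictions, and every function name below are assumptions chosen only to show the idea of keeping Pareto-optimal candidates and combining them by majority vote.

```python
# Illustrative sketch only; metric, data, and helper names are assumptions,
# not the procedure used in the paper.

def accuracy(y_true, y_pred):
    """Fraction of samples predicted correctly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def demographic_parity_gap(y_pred, group):
    """Absolute difference in positive-prediction rate between groups 0 and 1."""
    rates = []
    for g in (0, 1):
        members = [p for p, s in zip(y_pred, group) if s == g]
        rates.append(sum(members) / len(members))
    return abs(rates[0] - rates[1])

def pareto_front(scores):
    """Indices of non-dominated (accuracy, gap) pairs.

    A candidate is dominated if another one has accuracy at least as high
    and gap at least as low, with a strict improvement in one of the two.
    """
    front = []
    for i, (acc_i, gap_i) in enumerate(scores):
        dominated = any(
            acc_j >= acc_i and gap_j <= gap_i and (acc_j > acc_i or gap_j < gap_i)
            for j, (acc_j, gap_j) in enumerate(scores)
            if j != i
        )
        if not dominated:
            front.append(i)
    return front

def majority_vote(predictions):
    """Per-sample majority vote over binary predictions; ties go to class 1."""
    n_models = len(predictions)
    return [1 if 2 * sum(column) >= n_models else 0 for column in zip(*predictions)]

# Toy data: group 0 has a higher base rate of positives than group 1.
y_true = [1, 1, 1, 0, 1, 0, 0, 0]
group = [0, 0, 0, 0, 1, 1, 1, 1]

# Hypothetical predictions from four already-trained candidate models.
candidates = [
    [1, 1, 1, 0, 1, 0, 0, 0],  # most accurate, noticeable gap
    [1, 1, 0, 0, 1, 1, 0, 0],  # equal positive rates, less accurate
    [1, 1, 1, 0, 1, 1, 0, 0],  # in between
    [1, 1, 1, 1, 0, 0, 0, 0],  # worse on both objectives
]

scores = [(accuracy(y_true, p), demographic_parity_gap(p, group)) for p in candidates]
front = pareto_front(scores)
ensemble = majority_vote([candidates[i] for i in front])

print("Pareto-optimal candidates:", front)
print("Ensemble accuracy:", accuracy(y_true, ensemble))
print("Ensemble parity gap:", demographic_parity_gap(ensemble, group))
```

In this toy setup the fourth candidate is filtered out because the first one is both more accurate and fairer, and the vote over the remaining three lands on a compromise between the most accurate and the most balanced model.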

BibTeX

@article{2023-EnforcingFairness,
  title = {Enforcing Fairness using Ensemble of Diverse Pareto-Optimal Models},
  author = {Vitória Guardieiro AND Marcos Medeiros Raimundo AND Jorge Poco},
  journal = {Data Mining and Knowledge Discovery},
  year = {2023},
  url = {http://www.visualdslab.com/papers/EnforcingFairness},
}